High-Quality AI Lip Syncing Is Here

ByteDance has launched LatentSync, an impressive open-source model for video lip sync. It’s built on audio-conditioned latent diffusion models.

In simple terms, you upload a video of someone talking along with a separate audio file. The AI replaces the original audio with the new track and adjusts the speaker’s lip movements to match it.

The result is a highly realistic, though slightly eerie, deepfake video.

What is LatentSync?

The LatentSync framework leverages Stable Diffusion to directly model complex audio-visual correlations. However, diffusion-based lip-sync methods often struggle with maintaining temporal consistency due to frame-by-frame variations in the diffusion process.

To tackle this issue, researchers developed Temporal REPresentation Alignment (TREPA), a technique that enhances temporal consistency without compromising lip-sync accuracy. TREPA works by aligning generated frames with ground truth frames using temporal representations derived from large-scale self-supervised video models.
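As a rough illustration of the idea, the sketch below compares a generated clip with its ground truth in a temporal feature space instead of pixel by pixel. The `temporal_features` function is a hypothetical stand-in (plain frame-to-frame differences) for the large self-supervised video model the researchers use, and the mean squared distance is an assumed choice of metric, so treat this as a toy version of the concept rather than LatentSync's actual loss.

```python
import random


def temporal_features(frames):
    """Hypothetical stand-in for a pretrained video encoder.

    `frames` is a list of frames, each a flat list of pixel values.
    Returns the frame-to-frame differences, a crude motion descriptor.
    """
    return [
        [b - a for a, b in zip(prev, cur)]
        for prev, cur in zip(frames, frames[1:])
    ]


def trepa_style_loss(generated, ground_truth):
    """Mean squared distance between temporal features (assumed metric)."""
    gen = temporal_features(generated)
    ref = temporal_features(ground_truth)
    squared = [
        (g - r) ** 2
        for gen_step, ref_step in zip(gen, ref)
        for g, r in zip(gen_step, ref_step)
    ]
    return sum(squared) / len(squared)


random.seed(0)
# Ground truth: 8 frames of 16 pixels that brighten steadily.
ground_truth = [[t / 7.0] * 16 for t in range(8)]
# Same motion, with a constant brightness offset in every frame.
steady = [[p + 0.05 for p in frame] for frame in ground_truth]
# Independent noise in every frame, which shows up as flicker.
flicker = [
    [p + random.gauss(0.0, 0.05) for p in frame] for frame in ground_truth
]

loss_steady = trepa_style_loss(steady, ground_truth)
loss_flicker = trepa_style_loss(flicker, ground_truth)
print(f"steady offset: {loss_steady:.6f}, flicker: {loss_flicker:.6f}")
```

The constant offset leaves the motion untouched, so its loss is effectively zero. The flickering clip is penalized even though, on average, it deviates from the ground truth by a similar amount per pixel.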

LatentSync uses Whisper to convert mel spectrograms into audio embeddings, which are integrated into the U-Net via cross-attention layers. The U-Net’s input combines reference frames, masked frames, and noised latents.
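To make the cross-attention step more concrete, here is a minimal single-head sketch in plain Python. Queries come from the visual tokens, while keys and values come from the audio embeddings, so each visual token ends up as a weighted mix of audio information. The toy vectors, the 4-dimensional size, and the absence of learned projection matrices are all simplifying assumptions and do not reflect LatentSync's real layers.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(visual_tokens, audio_embeddings):
    """Single-head cross-attention without learned projections.

    Each visual token acts as a query; the audio embeddings serve as
    both keys and values (a simplification made for this sketch).
    """
    dim = len(audio_embeddings[0])
    attended, weights_per_token = [], []
    for query in visual_tokens:
        # Scaled dot-product score between this query and every audio key.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in audio_embeddings
        ]
        weights = softmax(scores)
        # Weighted sum of the audio values, one output dimension at a time.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, audio_embeddings))
            for i in range(dim)
        ]
        attended.append(mixed)
        weights_per_token.append(weights)
    return attended, weights_per_token


# Toy data: 2 visual tokens and 3 audio embeddings, all 4-dimensional.
visual_tokens = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
audio_embeddings = [
    [4.0, 0.0, 0.0, 0.0],
    [0.0, 4.0, 0.0, 0.0],
    [0.0, 0.0, 4.0, 0.0],
]

attended, weights = cross_attention(visual_tokens, audio_embeddings)
for token_weights in weights:
    print([round(w, 3) for w in token_weights])
```

Each row of weights sums to 1, and each visual token attends most strongly to the audio embedding it is most aligned with. Conceptually, this is how audio can steer what gets generated in the mouth region.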

How to generate lip-synced videos

You need a video and an audio file as input. If you don’t have an existing video, you can generate one with AI tools.

Say, for example, you want to create a promotional video featuring a young model. You can first create an image of them using Nectar AI, then turn that image into a video using Runway Gen-3 Alpha.

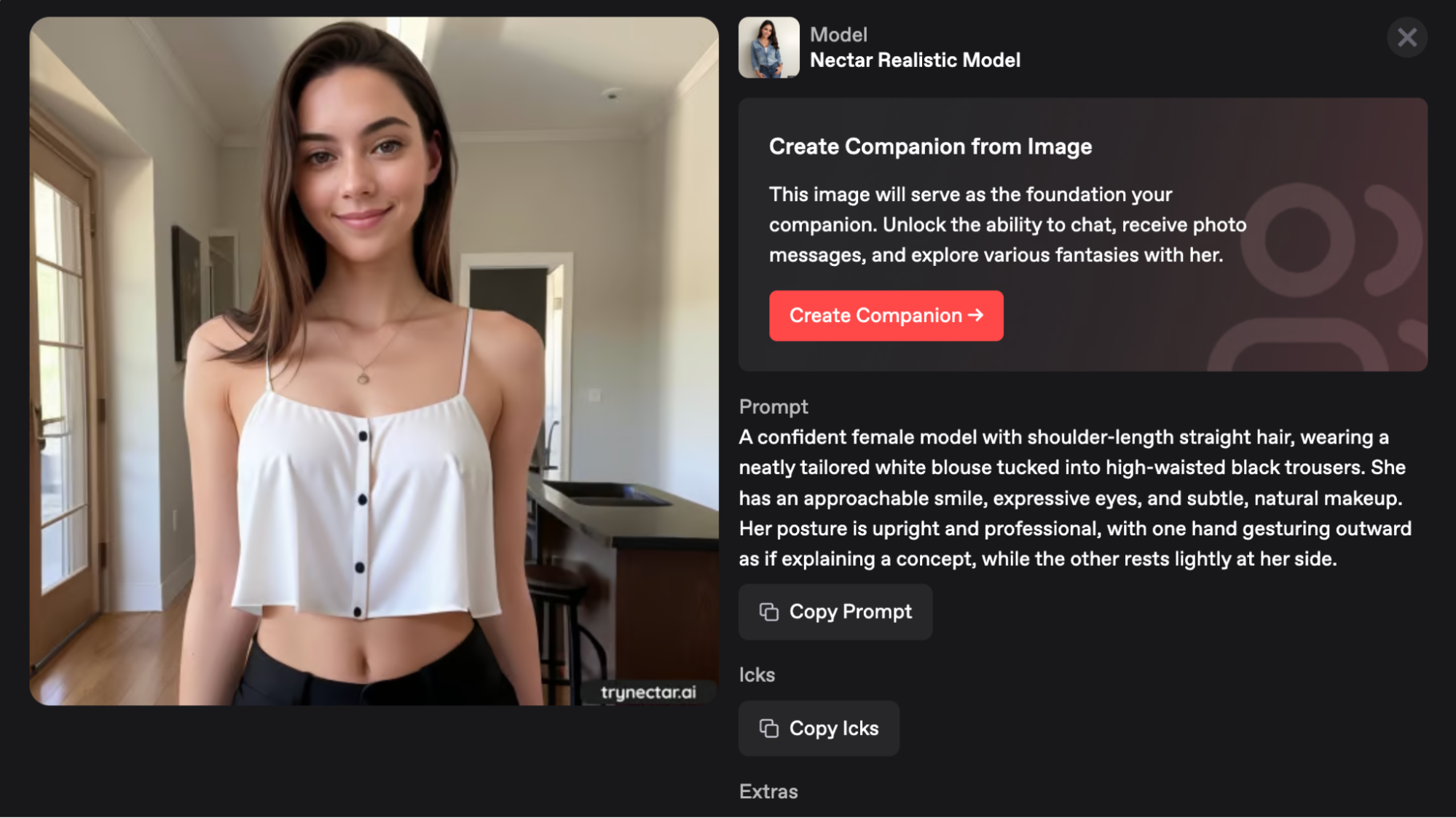

Here’s an example AI female model generated using Nectar AI:

Prompt: A confident female model with shoulder-length straight hair, wearing a neatly tailored white blouse tucked into high-waisted black trousers. She has an approachable smile, expressive eyes, and subtle, natural makeup. Her posture is upright and professional, with one hand gesturing outward as if explaining a concept, while the other rests lightly at her side.

You could also use other popular image generator platforms like Midjourney or Leonardo AI, but Nectar AI has the advantage for this specific use case because of its fine-tuned image models for attractive female characters.

The platform is also designed to give you full control over how your character looks. Nectar AI offers over 300 customization controls and various image styles to help you generate your perfect AI girlfriend.

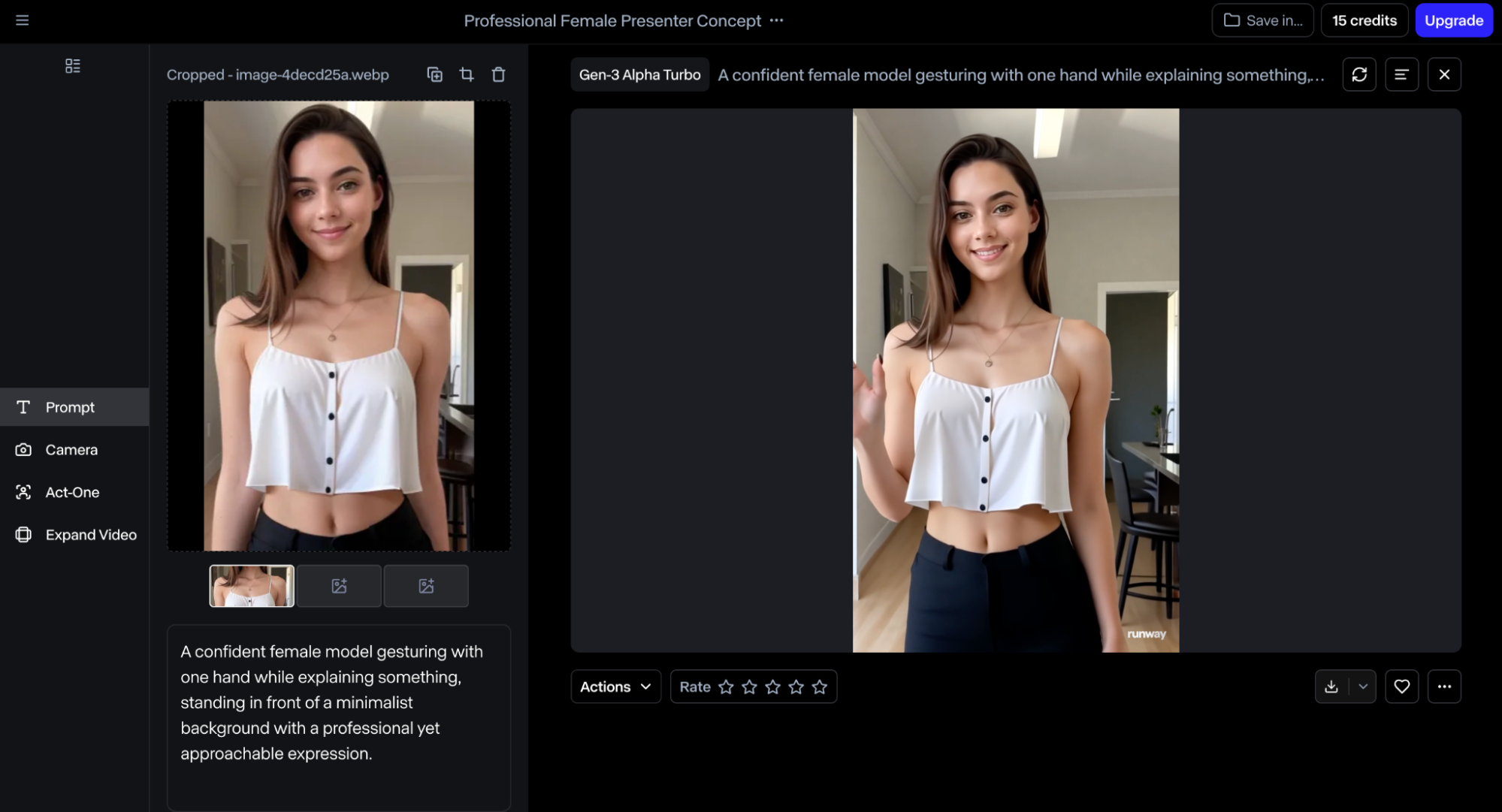

Download the image to your local disk and head over to Runway Gen-3 Alpha to turn it into a video. You can use the prompt below to guide the AI on what the final video should look like:

Prompt: A confident female model gesturing with one hand while explaining something, standing in front of a minimalist background with a professional yet approachable expression.

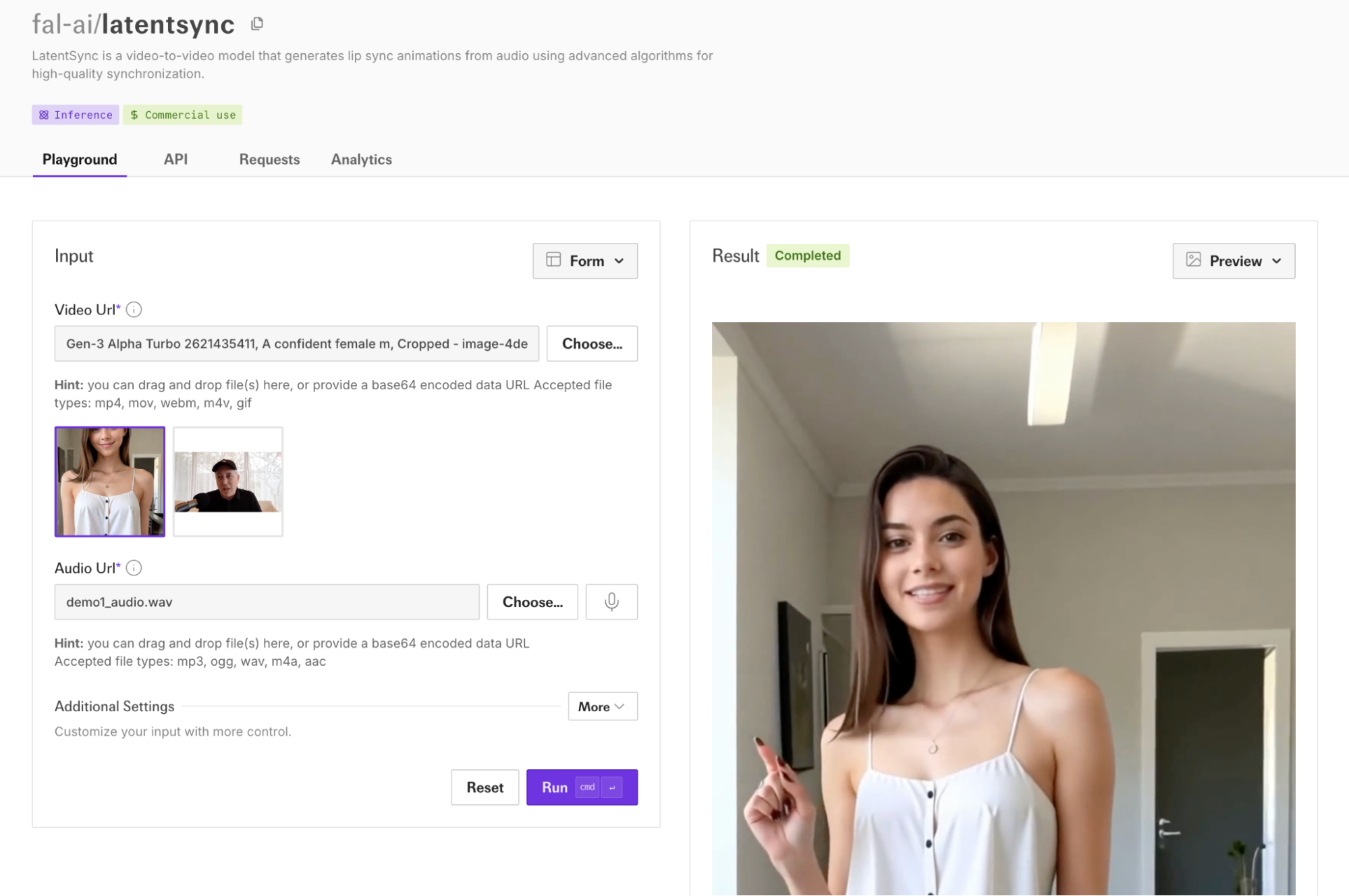

Great. Download the video file to your local disk and head over to Fal.ai to start working on the lip-synced video. On the Explore page, look for the LatentSync model and open its playground. In the video input field, upload the video you just generated with Runway Gen-3 Alpha. In the audio input field, upload the audio file you want the speaker to lip-sync to.

Finally, click the Run button and wait for the lip-synced video file to be generated.
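If you would rather script these steps than click through the playground, something along these lines may work. It assumes fal's Python client (`pip install fal-client`) and a `FAL_KEY` environment variable. The endpoint id `fal-ai/latentsync` and the `video_url` / `audio_url` argument names are assumptions to check against the model's API page on Fal.ai, and the URLs and file names are placeholders.

```python
def build_lipsync_request(video_url, audio_url):
    """Assemble the job arguments (argument names are assumptions)."""
    if not video_url or not audio_url:
        raise ValueError("both a video URL and an audio URL are required")
    return {"video_url": video_url, "audio_url": audio_url}


def run_lipsync(video_path, audio_path, endpoint="fal-ai/latentsync"):
    """Upload both files, run the model, and return the response.

    Needs the fal-client package and a FAL_KEY environment variable.
    The default endpoint id is an assumption; confirm it on Fal.ai.
    """
    import fal_client  # imported lazily so the helper above works offline

    video_url = fal_client.upload_file(video_path)
    audio_url = fal_client.upload_file(audio_path)
    return fal_client.subscribe(
        endpoint,
        arguments=build_lipsync_request(video_url, audio_url),
    )


# Offline check of the request payload with placeholder URLs.
request = build_lipsync_request(
    "https://example.com/clip.mp4",
    "https://example.com/voice.mp3",
)
print(request)
```

With a key configured, a call such as `run_lipsync("runway_clip.mp4", "voiceover.mp3")` would upload both files and wait for the job to finish. The exact shape of the returned result depends on the endpoint, so inspect it before relying on any field.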

That’s super cool. Generation is fast: the example above took less than 3 minutes, and the output file is under 2 MB for a 10-second video.

Here’s a preview of what the final video looks like:

You can download the video and use it to promote your brand or products on social media platforms like TikTok, Instagram Reels, or YouTube Shorts.

Now let’s talk about the potential use cases of this tech.

Language Localization in Media

This tool has huge potential for dubbing movies, TV shows, and online videos. Instead of relying on subtitles or misaligned dubbing, LatentSync can create lip-synced translations that feel natural and engaging, significantly enhancing the viewing experience for international audiences.

Education and E-Learning

AI lip-syncing can personalize educational content. Educators can create videos in multiple languages without losing the visual fidelity of a single presenter. This approach can make global educational resources more inclusive and accessible.

Entertainment and Film Production

LatentSync could drastically reduce the cost and time of reshoots in filmmaking. For instance, if a scene requires a dialogue change or translation for international audiences, LatentSync can alter the actor’s lip movements to match the new dialogue without requiring reshoots or CGI adjustments.

All of this is exciting, but it’s equally important to balance innovation with responsibility. The rise of AI-driven deepfake technologies like LatentSync brings inevitable ethical dilemmas.

The ease of creating hyper-realistic lip-synced videos could lead to misuse in spreading fake news, propaganda, or misleading advertisements. Safeguards are necessary to prevent malicious actors from exploiting this technology.

Also, with the increasing prevalence of deepfake technologies, audiences may find it harder to trust video content, even when it’s authentic. This erosion of trust could have long-term implications for journalism, social media, and interpersonal relationships.

Conclusion

AI lip syncing represents a significant milestone in AI-based video manipulation, with LatentSync delivering highly accurate lip sync by combining diffusion models with temporal alignment techniques. Its potential applications span diverse fields, from entertainment and marketing to education and beyond.

But it’s not perfect. It struggles a bit with fast speech, busy backgrounds, and less powerful hardware. And let’s not ignore the elephant in the room: deepfake misuse. If this kind of tech falls into the wrong hands, it could cause serious issues, from fake news to invasion of privacy.

That said, with the right safeguards and improvements, AI lip syncing could change how we think about video production. It’s not just about fixing problems or saving time; it’s about giving creators new ways to tell their stories, share ideas, and connect with people.

Authored By:

Ana Weissman, Product Manager @ Nectar AI

Ana manages the product operations and roadmap for a variety of products at Nectar. Her career experience spans Amazon, Hitachi, and Pinterest, showcasing her knack for innovation and strategic product development. Her experience reflects a blend of technical expertise and market acumen, especially in the adult space, driving impactful solutions in our products.